How to Build Risk-Centric AI Workflows for Legal Document Review

Learn how to design risk-centric AI document workflows for legal and compliance teams, including preprocessing, validation layers, and audit-ready controls.

AI is moving quickly into legal and compliance workflows, but in regulated environments, speed is not the constraint. Trust is.

Legal and compliance teams don’t just process documents; they interpret evidence. Contracts, regulatory filings, audit records, and internal communications all carry legal weight. If AI touches these documents, every output must be explainable, traceable, and defensible.

This is where many AI and traditional data extracttion approaches fall short. They focus on extraction (getting data out of documents) but not on validating whether that data is accurate, complete, and compliant before it’s used. That gap introduces risk in subtle but dangerous ways: privileged documents can be misclassified, regulatory clauses can be missed, and outputs can become impossible to defend during an audit.

In regulated enterprises, these aren’t technical issues. They are business risks.

This blog lays out a practical, risk-first blueprint for designing AI-powered document workflows in legal and compliance environments. It covers how to define risk boundaries, design decision checkpoints, implement human oversight patterns, and build audit-ready systems that can stand up to regulatory scrutiny.

The goal is not just to automate document review, but to make it trustworthy at scale.

A risk-centric AI document workflow is a document processing system designed to apply different levels of automation, validation, and human oversight based on the risk level of each document or decision. In legal and compliance environments, this ensures that high-risk documents are validated and auditable before AI outputs are used.

Before designing any workflow, organizations need a clear understanding of what “risk” actually means in their document ecosystem. Without that, automation decisions become arbitrary, and often unsafe.

In legal and compliance contexts, risk typically spans four interconnected dimensions:

These categories directly influence how much automation is acceptable for a given document.

Not every document should be treated the same. A marketing contract and a regulatory submission may both be “documents,” but the consequences of error are fundamentally different. That distinction must be reflected in workflow design.

A practical way to operationalize this is through a likelihood-versus-impact model. Low-risk, high-volume documents can move toward full automation. High-impact documents, even if rare, require stricter controls, including validation layers and human oversight.

This classification step becomes the foundation for everything that follows. It determines where automation is appropriate, where controls are required, and where human expertise must remain central.

A risk-focused AI workflow is not defined by a single model or tool. It is a coordinated system of steps designed to ensure that documents are not only processed, but trusted.

The workflow begins with ingestion, but in regulated environments, ingestion must capture more than just the document itself. It must preserve context.

This includes where the document came from, who created it, when it was modified, and how it has moved through systems. That lineage becomes critical when decisions need to be audited or defended.

When documents are treated as evidence rather than simple inputs, provenance is mission-critical. Without it, even accurate outputs can become unusable because they cannot be traced or verified.

Before AI is applied, documents must be normalized and prepared. This is where many workflows struggle, and where the difference between “AI that works in demos” and “AI that works in production” becomes clear.

Unstructured documents are messy by nature. They arrive as low-quality scans, handwritten forms, multi-layer PDFs, or images with inconsistent formatting. When these documents are passed directly into a large language model without preparation, the model is forced to interpret ambiguity. And when models interpret ambiguity, they fill in gaps.... often incorrectly.

Document preprocessing is the step where unstructured files are cleaned, standardized, and made machine-readable before AI extraction. It determines whether AI systems interpret documents accurately, or introduce errors.

Choosing a model is only part of the equation. In legal workflows, what matters more is how outputs are validated.

Traditional metrics like accuracy or F1 score are not sufficient on their own. Teams need to understand how models behave across specific legal use cases, such as clause extraction, entity recognition, or compliance validation, and where they fail.

More importantly, outputs must be validated against business and regulatory rules before they are accepted. This is where a dedicated accuracy and validation layer becomes essential. Instead of trusting model outputs by default, the system verifies them, flags inconsistencies, and prevents unreliable data from moving forward.

The shift from extraction to validation is what separates experimental AI from production-grade systems in regulated environments. An AI validation layer verifies extracted data against rules, confidence thresholds, and multiple model outputs before it reaches downstream systems.

Once data is extracted and validated, decisions must be routed appropriately. Not every output should follow the same path.

This routing is not static. It is driven dynamically by confidence thresholds, document classification, and policy rules. When designed correctly, it allows organizations to scale automation without compromising control.

Human oversight should not be treated as a fallback, but as a core design element.

In high-risk scenarios, workflows often begin with a review-first model, where human validation is required before any output is finalized. As confidence increases, teams may adopt a suggest-and-accept approach, where AI proposes decisions but humans remain accountable for approval. In complex or ambiguous cases, adjudication workflows ensure that experts resolve discrepancies before outcomes are finalized.

Modern platforms enable this by triggering human review based on thresholds or business rules, ensuring that attention is applied where it matters most.

Every decision made by the system must be reconstructable.

This means capturing not only the final output, but also the original document, the extracted data, the model’s confidence, the validation rules applied, and any human interventions. Together, these elements form an evidence package that can be used for audits, investigations, or regulatory inquiries.

Without this level of traceability, even well-performing systems can fail under scrutiny.

A simple head-to-head LLM document processing experiment makes this visible.

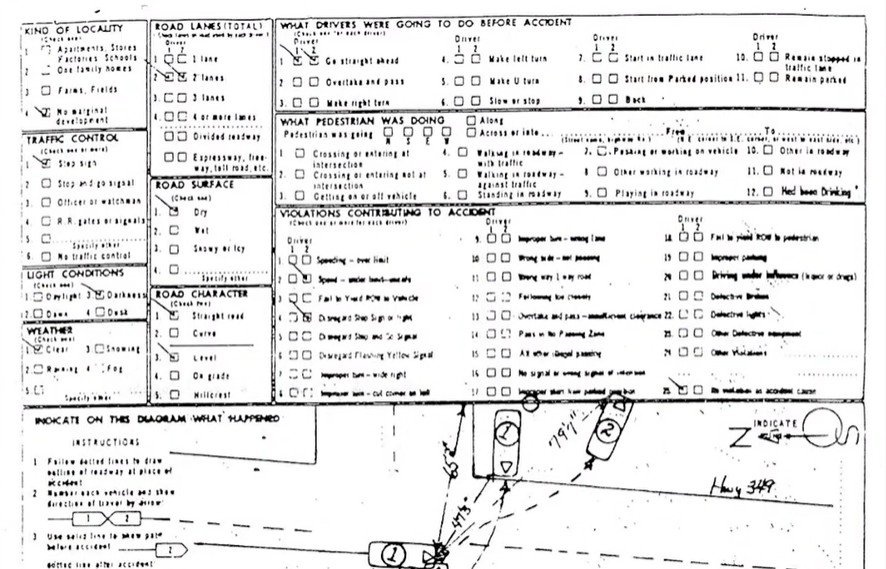

In this example, both systems are given the same document: a low-quality, scanned police accident report with handwritten notes and a diagram, exactly the kind of real-world input that tends to break AI. Both are asked to extract the same set of fields, including traffic control type, weather conditions, road surface, a diagram summary, handwritten narrative, and investigation location.

One approach sends the raw document directly to a single LLM.

The other applies preprocessing first (cleaning the image, standardizing structure, and preparing the content) before passing it through multiple models with validation.

You can watch the full example here.

The difference in outcomes is not subtle.

The single-model approach returns confident answers, but several are wrong. It misidentifies the investigation location and introduces details that don’t exist in the document, including hallucinated street names in the accident summary. Even the handwritten section is partially invented, despite high confidence scores.

By contrast, the preprocessed, multi-model approach correctly extracts key fields like “stop sign,” “clear weather,” “dry road surface,” and “Memorial Hospital.” The summary reflects the actual diagram, and the handwritten narrative is faithfully transcribed.

Same document. Same prompt. Completely different level of trust.

This is the critical point: LLMs do not fail because they are weak, they fail because the inputs are unstructured and unvalidated.

Preprocessing resolves this by removing ambiguity before the model ever sees the document. It standardizes formats, improves readability, and preserves fidelity so that the model is working with a clean, consistent representation of the source material.

When combined with multi-model validation, this creates a system that doesn’t just extract data, it verifies it. Instead of relying on a single interpretation, outputs are cross-checked, scored, and validated before they move downstream.

The result is better accuracy, but more importantly it is trustworthy data that can be used in legal and compliance workflows without introducing hidden risk.

And in regulated environments, that distinction is everything.

Key takeaway: When LLMs process raw, unstructured documents, they often produce confident but incorrect outputs. Preprocessing and validation transform those same documents into reliable, auditable data.

Risk-focused AI compliance workflows rely on structured checkpoints that prevent errors from propagating downstream.

These checkpoints operate at multiple levels.

The effectiveness of these gates depends on how thresholds are defined and calibrated. If thresholds are too strict, workflows become inefficient and overloaded with manual work. If they are too lenient, risk slips through unnoticed.

Advanced approaches combine multiple signals, such as model confidence, validation results, and contextual rules, to determine whether an output can be trusted. This creates a more nuanced and reliable decision framework than relying on a single score.

When thresholds are not met, escalation paths must be clearly defined. Instead of relying on manual intervention, workflows should automatically route cases to legal counsel or compliance specialists based on predefined criteria.

A well-designed workflow behaves less like a linear pipeline and more like a decision system, continuously evaluating risk and adjusting the path accordingly.

Beyond the workflow itself, governance ensures that the system operates safely over time.

Access control is a foundational requirement. Role-based permissions and separation of duties prevent unauthorized actions and reduce the risk of internal errors. Every interaction with the system should be logged, creating a transparent record of who did what and when.

Versioning is equally important. Models, prompts, validation rules, and workflows all evolve, and those changes must be tracked. Without version control, it becomes impossible to reproduce results or explain past decisions.

Privacy and data security must also be embedded into the workflow. Legal documents often contain sensitive information that must be protected through encryption, controlled access, and appropriate retention policies. Automated redaction can further reduce risk by masking sensitive data before it is processed or shared.

Finally, rigorous testing ensures that the system performs reliably under real-world conditions. This includes stress testing, adversarial scenarios, and regression testing to detect performance drift after updates.

Deploying an AI workflow is the beginning of ongoing assurance.

Performance must be monitored continuously, with metrics that reflect both technical accuracy and business impact. Precision and recall are important, but they must be interpreted in the context of risk. A false negative in a low-risk scenario may be acceptable; in a regulatory filing, it is not.

Over time, models and data distributions change. Drift detection and periodic revalidation help ensure that performance remains consistent. Sampling strategies can provide additional oversight, allowing teams to review a subset of outputs for quality assurance.

Operational dashboards play a key role in this process. They provide visibility into exception rates, processing times, and system health, while enabling real-time alerts when thresholds are breached.

When accuracy signals are directly connected to workflow automation, organizations can reduce manual intervention while maintaining control, improving both efficiency and trust.

Even well-designed systems encounter issues. What matters is how those issues are handled.

Effective organizations prepare for incidents in advance, with predefined playbooks for scenarios such as misclassification, data leakage, or regulatory inquiries. These playbooks ensure that responses are consistent, timely, and aligned with compliance requirements.

When an incident occurs, the system must support rapid evidence collection. This includes retrieving all relevant data, decisions, and logs associated with the event. Clear documentation of what happened, why it happened, and how it was addressed is essential for both internal review and external audits.

Post-incident analysis then identifies root causes and informs corrective actions, whether that involves updating models, refining validation rules, or adjusting workflow thresholds.

This cycle of detection, response, and improvement is what enables continuous risk reduction.

To operationalize these practices, teams rely on a consistent set of artifacts that standardize how workflows are designed and governed.

These typically include risk classification frameworks, escalation procedures, audit evidence templates, and model validation documentation. Together, they ensure that processes are repeatable, auditable, and aligned across teams.

Without these artifacts, governance becomes inconsistent, and risk increases.

The biggest misconception in compliance AI is that better models will solve risk.

They won’t.

Risk is introduced upstream, through inconsistent document quality, missing validation, and lack of control over how data flows into AI systems. Most extraction solutions focus on data extraction, but extraction alone does not create trust. It simply moves data faster, whether it is correct or not.

Adlib addresses this gap by acting as the AI accuracy and trust layer in front of core systems, LLMs, and automation systems. By transforming unstructured documents into validated, auditable, and AI-ready outputs, it ensures that downstream systems operate on data that can be trusted.

The primary risks include misclassification of privileged content, inaccurate extraction of regulatory data, lack of auditability, and inability to defend AI-driven decisions during audits or legal proceedings.

LLMs hallucinate in document workflows because they attempt to interpret incomplete or low-quality inputs. When documents are unstructured or ambiguous, the model fills gaps with plausible, but incorrect information.

Document AI becomes trustworthy when outputs are validated, traceable, and auditable. This requires preprocessing, multi-model validation, and clear audit trails, not just extraction.

A HITL workflow integrates human review into AI processes, ensuring that outputs are validated or approved by experts, especially in high-risk or low-confidence scenarios.

Extraction retrieves data, but validation ensures that the data is accurate, complete, and compliant. In regulated environments, unvalidated data introduces significant legal and operational risk.

By classifying documents based on risk (impact and likelihood) and aligning automation levels accordingly, from full automation for low-risk tasks to strict human oversight for high-risk scenarios.

Adlib ensures documents are normalized, validated, and structured before reaching AI systems, creating audit-ready, high-trust outputs that reduce risk and improve downstream decision-making.

Take the next step with Adlib to streamline workflows, reduce risk, and scale with confidence.